Are video games good or bad for you? One game certainly did a lot of good for Bruce Mouat. He started playing a curling video game called Granite when he was only 8. As an adult he leads the GB curling team at the Olympics and is one of the best players in the world, with multiple world gold medals.

Curling is a sport requiring physical skill, strategy and nerve. It involves sliding polished stones of super hard granite down an ice track. The aim is to land your stones nearest the centre of a series of concentric circles 40 or so metres away. Despite the distance, millimetre accuracy is often needed. It is called curling because the stones do not run in a straight line but curve their way into the target, in part controlled by sweepers who sweep the ice in front of the stones to alter the curl of the path as well as the distance.

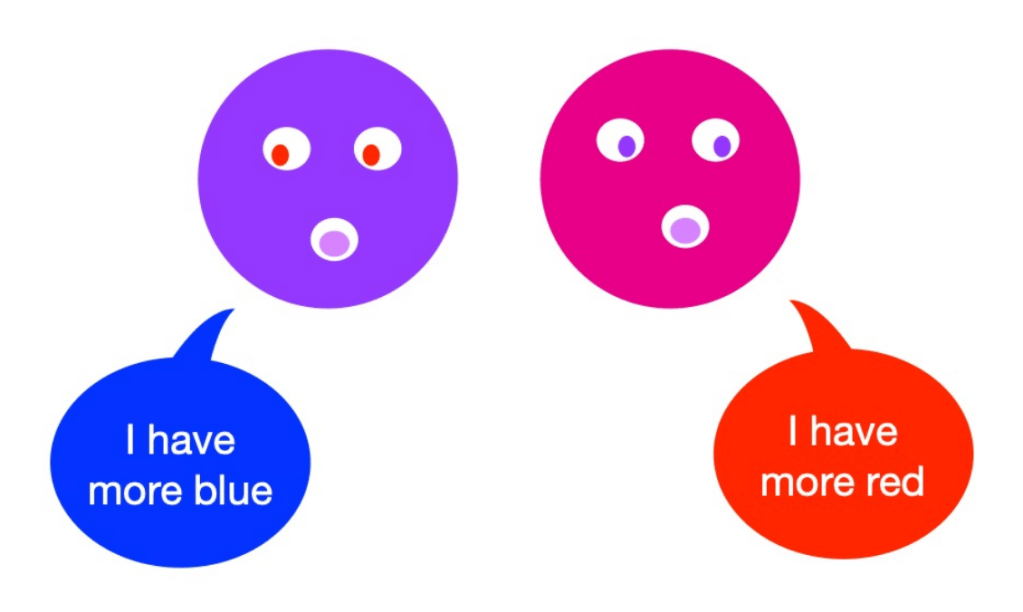

It is an incredibly strategic sport, with the players having to think several moves ahead and then put that strategy into practice with their skill. In that way it is very much like snooker. That is where the video game came in. Obsessed with it as a child, Bruce became outstanding at the decision making in the sport by playing the video game against his granny. The reason the video game helped is that it is more than just a game: it can just as well be called a simulation, like a flight simulator. Flight simulators are considered so accurate that pilots train on them to gain the hours needed to become skilful at controlling a plane. That is what the video game Granite does for curling.

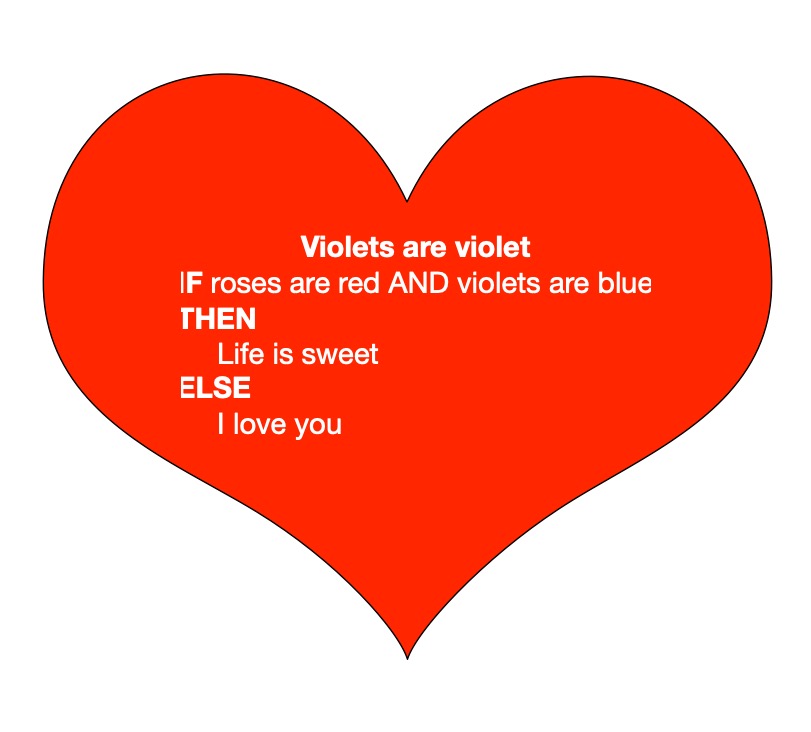

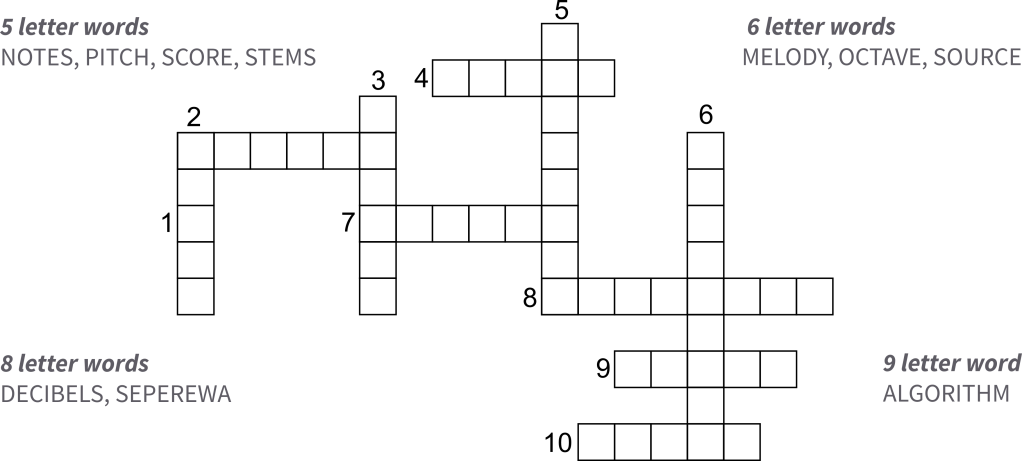

To work as a simulator it needs what is called a physics engine. The programmers have to code the actual physics of polished granite stones sliding on ice and of ice being swept, covering all the small differences made by ice temperature, the speed of the sweeping and so on. The programmers, who were married curlers themselves, made the physics so accurate that everything in the game behaves as closely as possible to how it would in reality. That means you can really learn strategy by playing it, even if you then still have to back that up with hours of practice playing real curling to gain the physical skill to land stones exactly where you want them. It can also, of course, help inspire people to start playing the sport in reality.
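To get a feel for what a physics engine does, here is a tiny sketch in Python. It is not how Granite works: it is a toy model that assumes friction is the only force slowing the stone, ignores curl completely, and uses made-up numbers (the friction value and the 20% effect of sweeping are assumptions for illustration). The function name `slide_distance` is our own. The key idea it shows is time stepping: the program moves the stone forward a tiny slice of time at once, updating its speed and position each step.

```python
G = 9.81  # gravitational acceleration in metres per second squared


def slide_distance(speed, friction, sweeping=False, dt=0.001):
    """Step a stone forward in time until friction stops it.

    Returns the distance travelled in metres. Toy model only:
    constant friction, straight-line motion, no curl.
    """
    if sweeping:
        # Assumption for illustration: sweeping cuts friction by 20%.
        friction = friction * 0.8

    distance = 0.0
    while speed > 0:
        deceleration = friction * G
        # The speed drops a little each time step, but never below zero.
        new_speed = max(0.0, speed - deceleration * dt)
        # Move the stone using the average speed over this time step.
        distance += 0.5 * (speed + new_speed) * dt
        speed = new_speed
    return distance


# Hypothetical throw: released at 2 metres per second on ice with friction 0.01.
unswept = slide_distance(2.0, 0.01)
swept = slide_distance(2.0, 0.01, sweeping=True)
print(f"unswept: {unswept:.2f} m, swept: {swept:.2f} m")
```

Even in this toy version the swept stone travels further than the unswept one. A real engine has to get far more right, including the curl, the ice temperature and the speed of the sweeping.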

In the 2026 Olympic final Bruce and his team came away with their second silver medal in consecutive Olympic Games, this time beaten by Canada. Even in losing the final match, Bruce Mouat made some absolutely amazing shots, both in terms of strategy and perfect physical skill under extreme pressure.

So playing a video game can certainly be good for you. When the physics is good enough for the game to act as a simulator, playing it is a way to put in the hours of practice needed to be world leading, just by having fun. So have a go at playing Granite and maybe you will also gain a love of the game, and so of the sport, then one day become a Winter Olympian yourself. Or if you love some other game or sport and learn to program, perhaps you could code a perfect simulator for it to give future players who enjoy your game an edge in the sport.
