It’s Day 18 of the CS4FN Christmas Computing Advent Calendar. We’ve been posting a computing-themed article linked to the picture on the ‘front’ of the advent calendar for the last 17 days and today is no exception. The picture is of a Christmas cracker so today’s theme is going to be computer hacking and cracking – all about Cyber Security.

If you’ve missed any of our previous posts, please scroll to the end of this one and click on the Christmas tree for the full list.

The terms ‘cracker’ and ‘hacker’ are often used interchangeably to refer to people who break into computers, though generally the word hacker also has a friendlier meaning – someone who uses their skills to find a workaround or a solution (e.g. ‘a clever hack’) – whereas a cracker is probably someone who shouldn’t be in your system and is up to no good. Both people can use very similar skills though – one is using them to benefit others, the other to benefit themselves.

We have an entire issue of the CS4FN magazine all about Cyber Security – it’s issue 24 and is called ‘Keep Out’ but we’ll let you in to read it. All you have to do is click on this very secret link, then click on the magazine’s front cover to download the PDF. But don’t tell anyone else…

Both the articles below were originally published in the magazine as well as on the CS4FN website.

Piracy on the open Wi-fi

by Jane Waite, Queen Mary University of London.

You arrive in your holiday hotel and ask about Wi-Fi. Time to finish off your online game, connect with friends, listen to music, kick back and do whatever is your online thing. Excellent! The hotel Wi-Fi is free and better still you don’t even need one of those huge long codes to access it. Great news, or is it?

You always have to be very cautious around public Wi-Fi whether in hotels or cafes. One common attack is for the bad guys to set up a fake Wi-Fi with a name very similar to the real one. If you connect to it without realising, then everything you do online passes through their computer, including all those user IDs and passwords you send out to services you connect to. Even if the passwords they see are encrypted, they can crack them offline at their leisure.

Things just got more serious. A group has created a way to take over hotel Wi-Fi. In July 2017, the FireEye security team found a nasty bit of code, malware, linked to an email received by a series of hotels. The malware was called GAMEFISH. But this was no game and it certainly had a bad, in fact dangerous, smell! It was a ‘spear phishing’ attack on the hotel’s employees. This is an attack where fake emails try to get you to go to a malware site (phishing), but where the emails appear to be from someone you know and trust.

Once in the hotel network, so inside the security perimeter, the code searched for the machines running the hotel’s Wi-Fi and took them over. Once there they sat and watched, sniffing out passwords from the Wi-Fi traffic: what’s called a man-in-the-middle attack.

The report linked the malware to a very serious team of Russian hackers, called Fancy Bear (or APT28), who have been associated with high profile attacks on governments across the world. GAMEFISH used a software tool (an ‘exploit’) called EternalBlue, along with some code that compiled their Python scripts locally, to spread the attack. Would you believe, EternalBlue is thought to have been created by the US Government’s National Security Agency (NSA), but leaked by a hacker group! EternalBlue was used in the WannaCry ransomware too. This may all start to sound rather like a far-fetched thriller but it is not. This is real! So think before you click to join an unsecured public Wi-Fi.

Just between the two of us: mentalism and covert channels

by Peter W McOwan, Queen Mary University of London.

Secret information should stay secret. Beware ‘covert channels’ though. They are a form of attack where an illegitimate way of transferring information is set up. Stopping information leaking is a bit like stopping water leaking – even the smallest hole can be exploited. Magicians have been using covert channels for centuries, doing mentalism acts that wow audiences with their ‘telepathic’ powers.

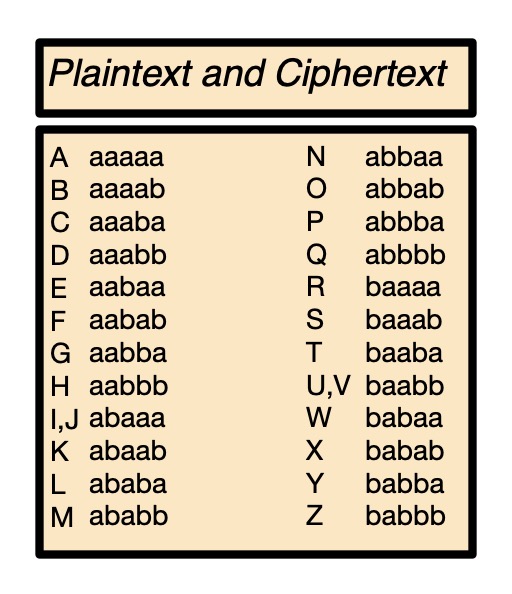

The secret codes of Mentalism

In the 1950s Australian couple Sydney and Lesley Piddington took the entertainment world by storm. They had the nation perplexed, puzzled and entertained. They were seemingly able to communicate telepathically over great distances. It all started in World War 2 when Sydney was a prisoner of war. To keep up morale, he devised a mentalism act where he ‘read the minds’ of other soldiers. When he later married Lesley they perfected the act and became an overnight sensation, attracting BBC radio audiences of 20 million. They communicated random words and objects selected by the audience, even when Lesley was in a circling aeroplane or Sydney was in a diving bell in a swimming pool. To this day their secret remains unknown, though many have tried to work it out. Perhaps they used a hidden transmitter. After all, that was fairly new technology then. Or perhaps they were using their own version of an old mentalism trick: a code to transmit information hidden in plain sight.

Sounds mysterious

Sydney had a severe stutter, and some suggested it was the pauses he made in words rather than the words themselves that conveyed the information. Using timing and silence to code information seems rather odd, but it can be used to great effect.

In the phone trick ‘Call the wizard’, for example, a member of the audience chooses any card from a pack. You then phone your accomplice. When they answer you say “I have a call for the wizard”. Your friend names the card suits: “Clubs … spades … diamonds … hearts”. When they reach the suit of the chosen card you say: “Thanks”.

Your phone friend now knows the suit and starts counting out the values, Ace to King. When they reach the chosen card value you say: “Let me pass you over”. Your accomplice now knows both suit and value so dramatically reveals the card to the person you pass the phone to.

This trick requires a shared understanding of the code words and the silence between them. When combined with the background count, information is passed. The silence is the code.
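The logic of ‘Call the wizard’ can be sketched in a few lines of Python. This is only an illustration: the function names are made up, and the order in which values are counted (Ace up to King) follows the description above. The wizard learns the card purely from the moment the caller chooses to speak.

```python
SUITS = ["Clubs", "Spades", "Diamonds", "Hearts"]
VALUES = ["Ace", "2", "3", "4", "5", "6", "7",
          "8", "9", "10", "Jack", "Queen", "King"]

def recite_until_interrupted(items, caller_speaks):
    """The wizard recites items aloud and stops when the caller speaks."""
    for spoken in items:
        if caller_speaks(spoken):
            return spoken
    raise ValueError("the caller never interrupted")

def call_the_wizard(chosen_suit, chosen_value):
    # Caller says "Thanks" when the wizard reaches the right suit.
    suit = recite_until_interrupted(SUITS, lambda s: s == chosen_suit)
    # Caller says "Let me pass you over" at the right value.
    value = recite_until_interrupted(VALUES, lambda v: v == chosen_value)
    return f"{value} of {suit}"

print(call_the_wizard("Diamonds", "7"))  # 7 of Diamonds
```

Notice that the words the caller says carry no information about the card at all. Only their timing against the wizard’s count does.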

Timing can similarly be used by a program to communicate covertly out of a secure network. Information might be communicated by the time a message is sent rather than its contents, for example.
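Here is a minimal sketch of how such a timing channel might work, written in Python. Everything in it is an assumption made for illustration: the gap lengths, the threshold and the function names are invented, and the ‘messages’ are simulated as a list of send times rather than real network traffic. A short gap between messages stands for a 0 and a long gap for a 1.

```python
SHORT_GAP = 1.0   # seconds between messages meaning "0" (made-up value)
LONG_GAP = 2.0    # seconds between messages meaning "1" (made-up value)
THRESHOLD = 1.5   # the receiver's cut-off between short and long

def text_to_bits(text):
    """Turn ASCII text into a flat list of 0s and 1s, 8 bits per character."""
    return [int(b) for ch in text.encode("ascii") for b in format(ch, "08b")]

def bits_to_text(bits):
    """Rebuild the text from groups of 8 bits."""
    codes = [int("".join(map(str, bits[i:i + 8])), 2)
             for i in range(0, len(bits), 8)]
    return bytes(codes).decode("ascii")

def send_times(secret, start=0.0):
    """Times at which innocent-looking messages would be sent."""
    times = [start]
    for bit in text_to_bits(secret):
        times.append(times[-1] + (LONG_GAP if bit else SHORT_GAP))
    return times

def recover(times):
    """An eavesdropper on the outside only needs the gaps between messages."""
    gaps = [later - earlier for earlier, later in zip(times, times[1:])]
    return bits_to_text([1 if gap > THRESHOLD else 0 for gap in gaps])

print(recover(send_times("hi")))  # hi
```

The messages themselves could say anything, or nothing interesting at all, which is what makes this kind of leak hard to spot by inspecting their contents.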

Codes on the table

Covert channels can be hidden in the existence and placement of things too. Here’s another trick.

The receiving performer leaves the room. A card is chosen from a pack by a volunteer. When the receiver arrives back they are instantly able to tell the audience the name of the card. The secret is in the table. Once the card has been selected, pack and box are replaced on the table. The agreed code might be:

If the box is face up and its flap is closed: Clubs.

If the box is face up and its flap is open: Spades.

If the box is face down and its flap is closed: Diamonds.

If the box is face down and its flap is open: Hearts.

That’s the suits taken care of. Now for the value. The performers agree in advance how to mentally chop up the card table into zones: top, middle and bottom of the table, and far right, right, left and far left. That’s 3 x 4 unique locations. 12 places for 12 values. The pack of cards is placed in the correct pre-agreed position, box face up or not, flap open or closed as needed. What about the 13th possibility? Have the audience member hold their hand out flat and leave the cards on it for them to ‘concentrate’ on.
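The table code is really just a lookup table, as this short Python sketch shows. The suit code is the one listed above. Which value goes with which zone is not fixed by the trick, so the ordering here (Ace to Queen in reading order across the table, with King as the ‘in the hand’ case) is an assumption the two performers would agree beforehand.

```python
SUIT_CODE = {
    "Clubs": ("up", "closed"),
    "Spades": ("up", "open"),
    "Diamonds": ("down", "closed"),
    "Hearts": ("down", "open"),
}
ROWS = ["top", "middle", "bottom"]
COLUMNS = ["far left", "left", "right", "far right"]
VALUES = ["Ace", "2", "3", "4", "5", "6", "7",
          "8", "9", "10", "Jack", "Queen", "King"]

def encode(value, suit):
    """What the sender does with the box after the card is chosen."""
    face, flap = SUIT_CODE[suit]
    index = VALUES.index(value)
    if index == 12:                      # the 13th value: no zone left
        place = "hand"
    else:
        place = (ROWS[index // 4], COLUMNS[index % 4])
    return face, flap, place

def decode(face, flap, place):
    """What the receiver reads off on walking back into the room."""
    suit = next(s for s, code in SUIT_CODE.items() if code == (face, flap))
    if place == "hand":
        value = VALUES[12]
    else:
        row, column = place
        value = VALUES[ROWS.index(row) * 4 + COLUMNS.index(column)]
    return value, suit

print(encode("7", "Hearts"))                       # ('down', 'open', ('middle', 'right'))
print(decode("down", "open", ("middle", "right"))) # ('7', 'Hearts')
```

Two box orientations times two flap positions give the 4 suits, and 12 zones plus the hand give the 13 values, so all 52 cards have their own signal.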

Again a similar idea can be used as a covert channel to subvert a security system: information might be passed based on whether a particular file exists or not, say.
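As a rough illustration, here is how a file’s existence could carry one bit at a time in Python. The file name and function names are invented for the example, and both ‘sides’ run in the same program in a temporary folder. In a real attack they would be two separate programs that can both see the same folder, and they would also need some agreed way of taking turns.

```python
import tempfile
from pathlib import Path

def send_bit(flag, bit):
    """Sender: create the file for a 1, make sure it is absent for a 0."""
    if bit:
        flag.touch()
    else:
        flag.unlink(missing_ok=True)   # needs Python 3.8 or later

def read_bit(flag):
    """Receiver: never opens the file, only checks whether it is there."""
    return 1 if flag.exists() else 0

def transmit(bits):
    received = []
    with tempfile.TemporaryDirectory() as shared_folder:
        flag = Path(shared_folder) / "marker.tmp"   # hypothetical file name
        for bit in bits:
            send_bit(flag, bit)
            received.append(read_bit(flag))
    return received

print(transmit([1, 0, 1, 1]))  # [1, 0, 1, 1]
```

The file can stay completely empty. Like the card box on the table, it is the fact that it is there, not what is inside it, that passes the message.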

Making it up as you go along

These are just a couple of examples of the clever ideas mentalists have used to amaze and entertain audiences with feats of seemingly superhuman powers. Our cs4fn mentalism portal has more. Some claim they have the powers for real, but with two dedicated performers and a lot of cunning memory work, it’s often hard to decipher performers’ methods. Covert channels can be similarly hard to spot.

Perhaps the Piddingtons’ secret was actually a whole range of different methods. Just before she died, Lesley Piddington is said to have told her son, “Even if I wanted to tell you how it was done, I don’t think I would be able”. However it was done, they were using some form of covert channel to cement their place in magic history. As Sydney said at the end of each show, “You be the judge”.

EPSRC supports this blog through research grant EP/W033615/1.