Why can’t you trust what an AI says?

by Paul Curzon, Queen Mary University of London

Chatbots that can answer questions and write things for you are in the news at the moment. These Artificial Intelligence (AI) programs are very good now at writing about all sorts of things from composing songs and stories to answering exam questions. They write very convincingly in a human-like way. However, one of the things about them is that they often get things wrong. Apparently, they make “facts” up or as some have described it “hallucinate”. Why should a computer lie or hallucinate? What is going on? Writing postcards will help us see.

Write a postcard

We can get an idea of what is going on if we go back to one of the very first computer programs that generated writing. It was in the 1950s and written by Christopher Strachey a school teacher turned early programmer. He wrote a love letter writing program but we will look at a similar idea: a postcard writing program.

Postcards typically might have lots of similar sentences, like “Wish you were here” or “The weather is lovely”, “We went to the beach” or “I had my face painted with butterflies”. Another time you might write things like: The weather is beautiful”, “We went to the funfair” or “I had my face painted with rainbows”. Christopher Strachey’s idea was to write a program with template sentences that could be filled in by different words: “The weather is …”, “We went to the …”, “I had my face painted with …”. Then the program picks some sentence templates at random, and then picks words at random to go in their slots. In this way, applied to postcard writing it can write millions of unique postcards. It might generate something like the following, for example (where I’ve bolded the words it filled in):

Dear Gran,

I’m on holiday in Skegness. I’ve had a wonderful time. The weather is sunny, We went to the beach. I had my face painted with rainbows. I’ve eaten lots strawberry ice cream. Wish you were here!

Lots of love from Mo

but the next time you ask it to it will generate something completely different.

Do it yourself

You can do the same thing yourself. Write lots of sentences on strips of card, leaving gaps for words. Give each gap a number label and note whether it is an adjective (like ‘lovely’ or ‘beautiful’) or a noun (like ‘beach’ or ‘funfair’, ‘butterflies’ or ‘rainbows’). You could also have gaps for verbs or adverbs too. Now create separate piles of cards to fit in each gap. Write the number that labels the gap on one side and different possible words of the right kind for that gap on the other side of the cards. Then keep them in numbered piles.

To generate a postcard (the algorithm or steps for you to follow), shuffle the sentence strips and pick three or four at random. Put them on the table in front of you to spell out a message. Next, go to the numbered pile for each gap in turn, shuffle the cards in that pile and then take one at random. Place it in the gap to complete the sentence. Do this for each gap until you have generated a new postcard message. Add who it is to and from at the start and end. You have just followed the steps (the algorithm) that our simple AI program is following.

Making things up

When you write a postcard by following the steps of our AI algorithm, you create sentences for the postcard partly at random. It is not totally random though, because of the templates and because you chose words to write on cards for each pile that make sense there. The words and sentences are about things you could have done – they are possible – but that does not mean you did do them!

The AI makes things up that are untrue but sound convincing because even though it is choosing words at random, they are appropriate and it is fitting them into sentences about things that do happen on holiday. People talk of chatbots ‘hallucinating’ or ‘dreaming’ or ‘lying’ but actually, as here, they are always just making the whole thing up just as we are when following our postcard algorithm. They are just being a little more sophisticated in the way that they invent their reality!

Our simple way of generating postcards is far simpler than modern AIs, but it highlights some of the features of how AIs are built. There are two basic parts to our AI. The template sentences ensure that what is produced is grammatical. They provide a simple ‘language model‘: rules of how to create correct sentences in English that sound like a human would write. It doesn’t write like Yoda :

“Truly wonderful, the beach is.”

though it could with different templates.

The second part is the sets of cards that fit the gaps. They have to fit the holes left in the templates – only nouns in the noun gaps, adjectives in the adjectives gap, and also fit

Given a set of template sentences about what you might do on holiday, the cards provide data to train the AI to say appropriate things. The cards for the face paining noun slot need to be things that might be painted on your face. By providing different cards you would change the possible sentences. The more cards the more variety in the sentences it writes.

AIs also have a language model, the rules of the language and which words go sensibly in which places in a sentence. However, they also are trained on data that gives the possibilities of what is actually written. Rather than a person writing templates and thinking up words it is based on training data such as social media posts or other writing on the Internet and what is being learnt from this data is the likelihood of what words come next, rather than just filling in holes in a template. The language model used by AIs is also actually just based on the likelihood of words appearing in sentences (not actual grammar rules).

What’s the chances of that?

So, the chatbots are based on the likelihood of words appearing and that is based on statistics. What do we mean by that? We can add a simple version of it to our Postcard AI but first we would need to collect data. How often is each face paint design chosen at seaside resorts? How often do people go to funfairs when on holiday. We need statistics about these things.

As it stands any word we add to the stack of cards is just as likely to be used. If we add the card maggots to the face painting pile (perhaps because the face painter does gruesome designs at Halloween) then the chatbot could write

“I had my face painted with maggots”.

and that is just as likely as it writing

“I had my face painted with butterflies”.

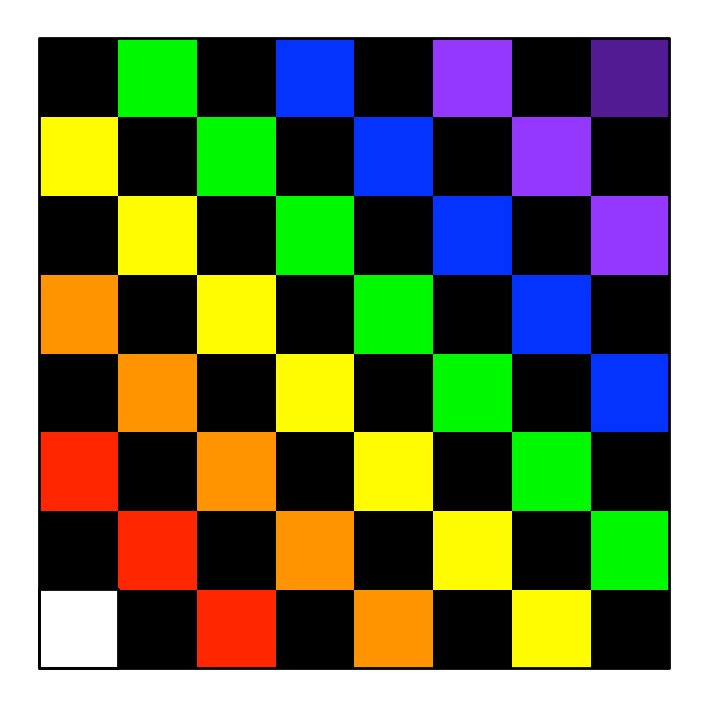

If the word maggots is not written on a card it will never write it. Either it is possible or it isn’t. We could make the chatbot write things that are more realistic, however, by adding more cards of words that are about things that are more popular. So, if in every 100 people having their face painted, almost a third, 30 people choose to have butterflies painted on their face, then we create 30 cards out of 100 in the pack with the word BUTTERFLY on (instead of just 1 card). If 5 in a 100 people choose the rainbow pattern then we add five RAINBOW cards, and so on. Perhaps we would still have one maggot card as every so often someone who likes grossing people out picks it even on holiday. Then, over all the many postcards written this way by our algorithm, the claims will match statistically the reality of what humans would write overall if they did it themselves.

As a result, when you draw a card for a sentence you are now more likely to get a sentence that is true for you. However, it is still more likely to be wrong about you personally than right (you may have had your face painted with butterflies but 70 of the 100 cards still say something else). It is still being chosen by chance and it is only the overall statistics for all people who have their face painted that matches reality not the individual case of what is likely true for you.

Make it personal

How could we make it more likely to be right about you? You need to personalise it. Collect and give it (ie train it on) more information about you personally. Perhaps you usually have a daisy painted on your face because you like daisies (you personally choose a daisy pattern 70% of the time). Sometimes you have rainbows (20% of the time). You might then on a whim choose each of 10 other designs including the butterfly maybe 1 in a hundred times. So you make a pile of 70 DAISY cards, 20 RAINBOW cards and 1 card for each of the other designs, Now, its choices, statistically at least, will match yours. You have trained it about yourself, so it now has a model of you.

You can similarly teach it more about yourself generally, so your likely activities, by adding more cards about the things you enjoy – if you usually choose chocolate or vanilla ice cream then add lots of cards for CHOCOLATE and lots for VANILLA, and so on. The more cards the postcard generator has of a word, the more likely it is to use that word. By giving it more information about yourself, it is more likely to be able to get things about you right. However, it is of course still making it up so, while it is being realistic, on any given occasion it may or may not match reality that time.

Perfect personalisation

You could go a step further and train it on what you actually did do while on this holiday, so that the only cards in the packs are the ones you did actually do on this holiday. (You ate hotdogs and ice cream and chips and … so there are cards for HOTDOG, ICE CREAM, CHIPS …). You had one vanilla ice cream, two chocolate and one strawberry so have that number of each ice cream card. If it knows everything about you then it will be able to write a postcard that is true! That is why companies behind AIs want to collect every detail of your life. The more they know about you the more they get things right about you and so predict what you will do in future too.

Probabilities from the Internet

The modern chatbots work by choosing words at random based on how likely they are in a similar way to our personalised postcard writer. They pick the most likely words to write next based on probabilities of those words coming next in the data they have been trained on. Their training data is often conversations from the Internet. If the word is most likely to come next in all that training data, then the chatbot is more likely to use that word next. However, that doesn’t make the sentence it comes up with definitely true any more than with our postcard AI.

You can personalise the modern AIs too, by giving them more accurate information about yourself and then they are more likely to get what they write about you right. There is still always a chance of them picking the wrong words, if it is there as a possibility though, as they are still just choosing to some extent at random.

Never trust a chatbot

Artificial Intelligences that generate writing do not hallucinate just some of the time. They hallucinate all of the time, just with a big probability of getting it right. They make everything up. When they get things right it is just because the statistics of the data they were trained on made those words the most likely ones to be picked to follow what went before. Just as the Internet is full of false things, an Artificial Intelligence can get things wrong too.

If you use them for anything that matters, always double check that they are telling you the truth.

More on …

Related Magazines …

EPSRC supports this blog through research grant EP/W033615/1.