The music in a toy commercial isn’t just background noise. It tells you who the advert is for, and a machine learning model can hear it (even when you barely notice the difference). Luca Marinelli tells us more.

Next time you’re watching TV, try muting the adverts and then turning the sound back on. You’ll probably notice something odd. The music in adverts for dolls and playsets sounds completely different from the music in adverts for action figures and toy cars. One sounds smooth and tuneful. The other sounds loud and chaotic. But here’s the question: is that just your imagination, or is the difference real and measurable? For my PhD research at Queen Mary University of London I decided to find out using machine learning.

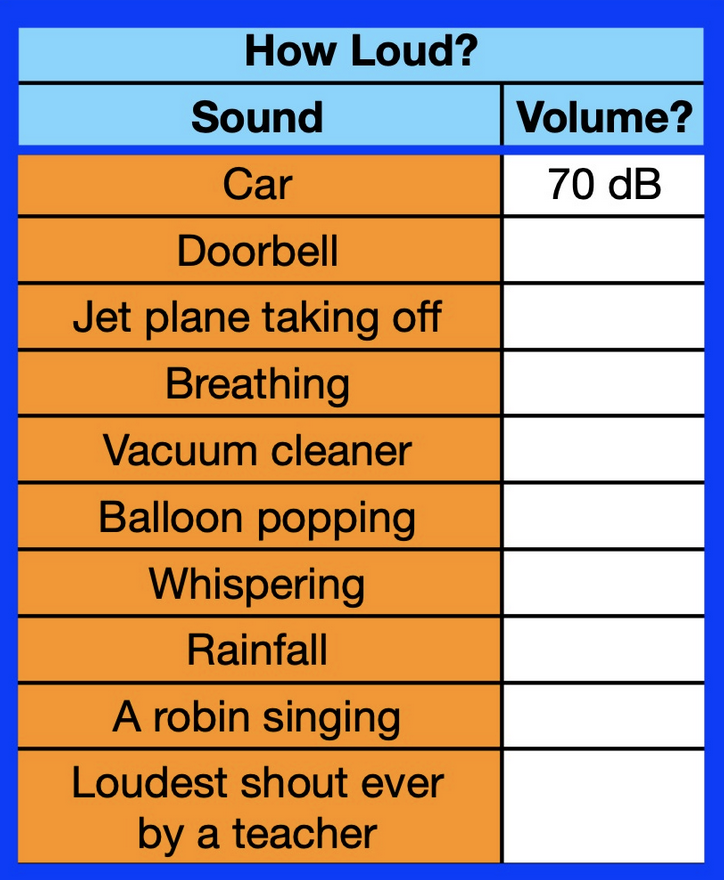

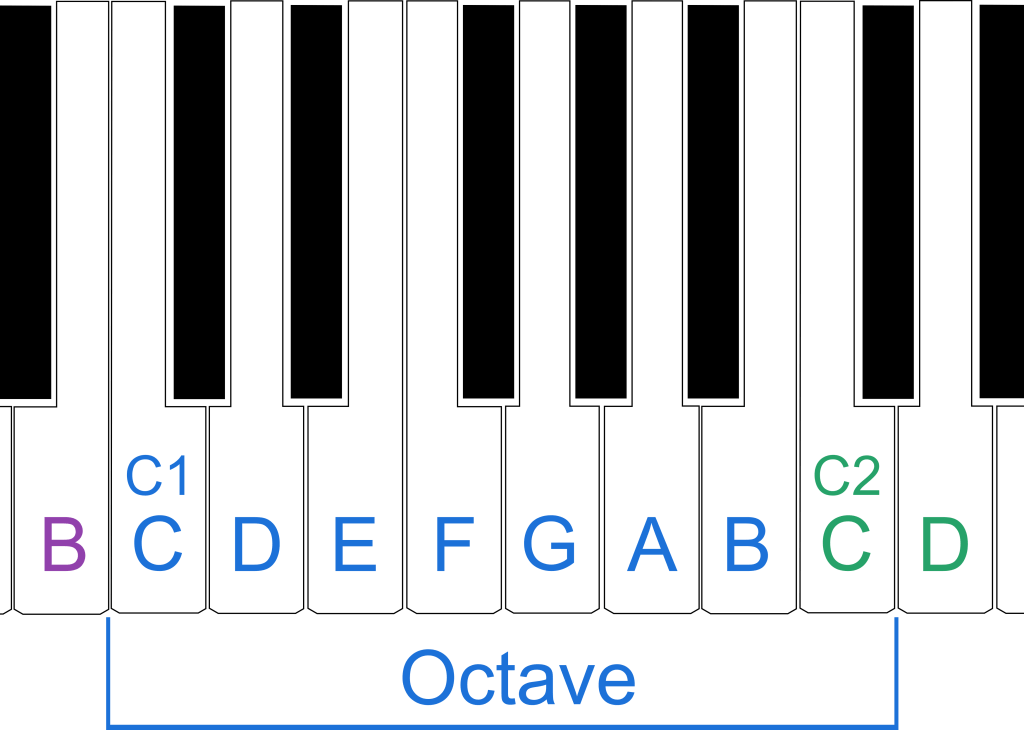

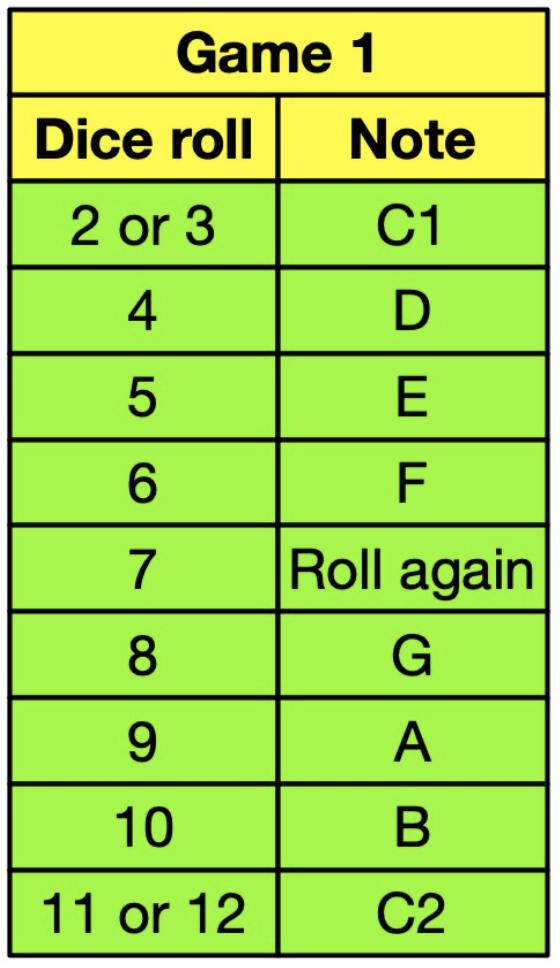

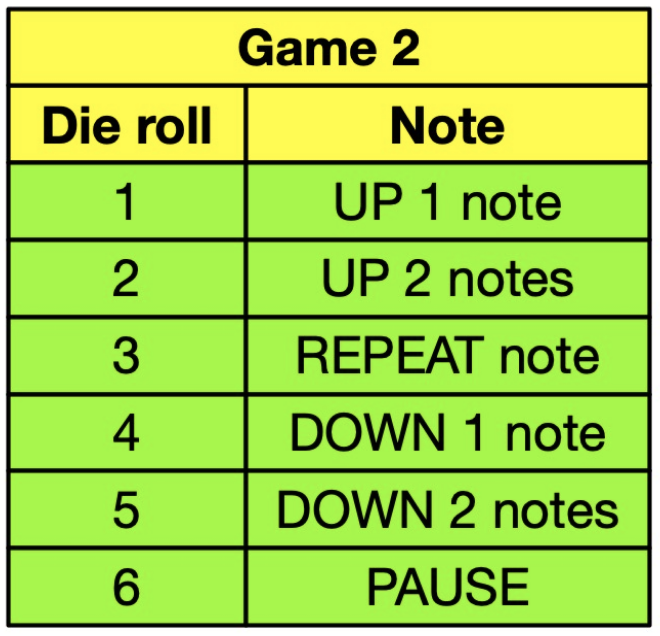

I collected over 600 toy commercials from a UK retailer’s YouTube channel, split into three groups: ads aimed at girls, ads aimed at boys, and ads aimed at mixed audiences. Then I fed the soundtracks into a computer program and had it extract dozens of measurements from each one. Not “does this sound nice?” (computers can’t answer that) but more precise numerical values like “how rough does the sound spectrum look”, “how regular is the beat” or “how clearly does this audio sit in a musical key”. Think of it as turning every piece of music into a long list of numbers that each describe a property of it.

Then I trained a type of machine learning model called a classifier, to look at those numbers and predict: is this intended as a girls’ ad, a boys’ ad, or a mixed one? The classifier got it right a remarkable 91% of the time when comparing girls-only and boys-only ads. That’s not luck. That’s a genuine, detectable pattern hidden in the sound. But which measurements were actually doing the work? This is where the research gets interesting, and where a technique called SHAP (Shapley Additive exPlanations) comes in. SHAP is a way of asking a machine learning model to explain its own decisions. Instead of just getting a yes/no answer, you can ask: “which features pushed you towards saying this was a girls’ ad, and which ones pushed you the other way?” It’s a bit like asking a judge not just for a verdict, but for their full reasoning.

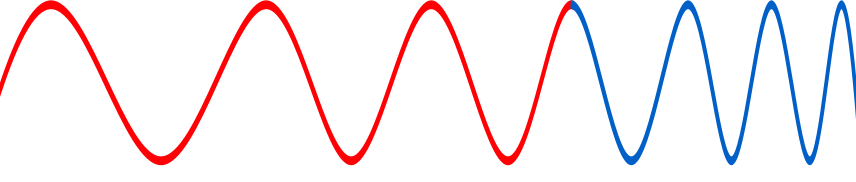

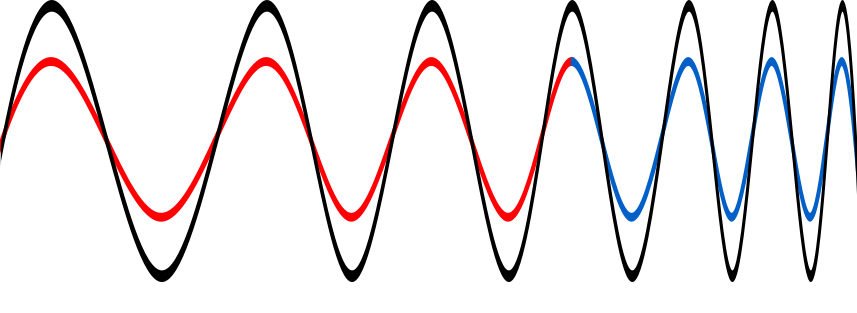

What SHAP revealed was striking. Ads targeting girls consistently had higher harmonicity, meaning the sounds fit together into clear, pleasant musical patterns, and more rhythmic regularity, meaning the beat was steady and predictable. Their audio spectrum (a kind of fingerprint of all the frequencies present) was also broader and smoother. Boys’ ads, by contrast, scored higher on spectral roughness (sounds that are abrasive) and spectral entropy (a measure of how chaotic or unpredictable the sound is). They were also simply louder. In plain terms: girls’ ads sound harmonious and organised. Boys’ ads sound noisy, aggressive, and jagged. And a machine learning model can tell the difference with 91% accuracy just from the audio alone, without seeing a single frame of video. These patterns almost certainly aren’t accidental. Marketers are making deliberate choices about music to signal who a product is “for”. The sound itself carries a hidden message.

We showed how AI can be used to hold up a mirror to human behaviour. When we use explainable AI we can spot patterns in the world that are so familiar we’ve stopped noticing them. The music in a toy advert might seem trivial, but if an algorithm can reliably predict the intended audience just from the soundtrack, that tells us something important: gender stereotypes aren’t just visible, they’re audible too.

Luca Marinelli, Queen Mary University of London

More on …

Getting Technical…

- Read the Research Paper

Subscribe to be notified whenever we publish a new post to the CS4FN blog.