How Foley artists can manipulate natural and synthesised sounds for film, TV and radio

Image by Kabomani-Tapir from Pixabay

Theatre producers, radio directors and film-makers have been trying to create realistic versions of natural sounds for years. Special effects teams break frozen celery stalks to mimic breaking bones, smack coconut shells on hard-packed sand to mimic galloping horses, and rustle cellophane for a crackling fire. Famously, in the first Star Wars movie the Wookiee sounds are each made up of up to six animal clips combined, including a walrus! Sometimes the special effects people even record the real thing and play it at the right time! (Not a good idea for the breaking bones though!) The person using props to create sounds for radio and film is called a Foley artist, named after the work of Jack Donovan Foley in the 1920s. Now the Foley artist is drawing on digital technology to get the job done.

Designing sounds

Sound designers have a hard job finding the right sounds. So how about creating sound automatically using algorithms? Synthetic sound! Research into sound creation is a hot topic, not just for special effects but also to help understand how people hear and for use in many other sound-based systems. We can create simple sounds fairly easily using musical instruments and synthesisers, but creating sounds from nature, animal sounds and speech is much more complicated.

The approaches used to recognize sounds can be the basis of generating sounds too. You can either try to hand-craft a set of rules that describe what makes the sound sound the way it does, or you can write algorithms that work it out for themselves.

Paying patterns attention

One method, developed as a way to automatically generate synthetic sound, is based on looking for patterns in the sounds. Computer scientists often create mathematical models to better understand things, as well as to recognize and generate computer versions of them. The idea is to look at (or here listen to) lots of examples of the thing being studied. As patterns become obvious, the scientists also start to identify elements that don’t have much impact. Those features are ignored so the focus stays on the most important parts. In doing this they build up a general model, or view, that describes all possible examples. This skill of ignoring unimportant detail is called abstraction, and creating a general view, a model of something, is called generalisation: both are important parts of computational thinking. The result is a hand-crafted model for generating that sound.

That’s pretty difficult to do though, so instead computer scientists write algorithms to do it for them. Now, rather than a person trying to work out what is, or is not, important, training algorithms work it out using statistical rules. The more data they see, the stronger the pattern that emerges, which is why these approaches are often referred to as ‘Big Data’. They rely on number crunching vast data sets. The learnt pattern is then matched against new data to find further examples, or used as the basis for creating new examples that match the pattern.
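To make the idea of learning a pattern and then reusing it concrete, here is a minimal Python sketch. It is only a toy, not how any real sound system works: the loudness numbers are made up for illustration, the "pattern" is just an average and a spread, and the helper names `matches` and `generate` are hypothetical.

```python
import statistics

# Hypothetical training data: loudness values measured from example rain clips.
training_loudness = [41.2, 39.8, 40.5, 42.1, 38.9, 40.0, 41.7, 39.5]

# "Learning": summarise the examples as a mean and a spread (a normal distribution).
model = statistics.NormalDist.from_samples(training_loudness)


def matches(model, value, max_sigmas=3.0):
    """Recognise: is a new value within a few standard deviations of the learnt pattern?"""
    return abs(value - model.mean) <= max_sigmas * model.stdev


def generate(model, count, seed=0):
    """Generate: draw new values that follow the learnt pattern."""
    return model.samples(count, seed=seed)


print(matches(model, 40.3))   # a value close to the training examples
print(matches(model, 80.0))   # a value far away from them
print(generate(model, 3))     # three new values drawn from the learnt pattern
```

The same learnt model is used in both directions: to check whether new data fits, and to produce fresh examples that fit. With more training examples, the estimated mean and spread become more reliable.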

The rain in train(ing)

Number crunching based on Big Data isn’t the only way though. Sometimes general patterns can be identified from knowledge of the thing being investigated. For example, rain isn’t one sound but is made up of lots of raindrops all doing a similar thing. Natural sounds often have that kind of property. So knowledge of a phenomenon can be used to create a basic model to build a generator around. This is an approach Richard Turner, now at Cambridge University, has pioneered, analysing the statistical properties of natural sounds. By creating a basic model and then gradually tweaking it to match the sound quality of lots of different natural sounds, his algorithms can learn what natural sounds are like in general. Then, given a specific natural ‘training’ sound, they can generate synthetic versions of that sound by choosing settings that match its features. You could give them a recorded sample of real rain, for example. His sound processing algorithms then apply a bunch of maths that pulls out the important features of that particular sound based on the statistical models. With the critical features identified and plugged into his general model, a new sound of any length can be generated that still matches the statistical pattern of, and so sounds like, the original. Using the model you can create lots of different versions of rain that all still sound like rain, lots of different campfires, lots of different streams, and so on.
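The "lots of drops all doing a similar thing" idea can be sketched in a few lines of Python. This is a toy illustration only, and is not Richard Turner's actual method: each drop is assumed to be a short fading sine burst, the only "feature" matched is overall loudness (RMS), and the names `drop`, `rain`, `rms` and `synthesise_like` are invented for this example.

```python
import math
import random

SAMPLE_RATE = 8000  # samples per second; an assumed, deliberately low rate


def drop(freq, duration=0.01):
    """One toy 'raindrop': a short sine burst that fades out exponentially."""
    n = int(duration * SAMPLE_RATE)
    return [
        math.sin(2 * math.pi * freq * i / SAMPLE_RATE) * math.exp(-5.0 * i / n)
        for i in range(n)
    ]


def rain(seconds, drops_per_second, loudness, rng):
    """Basic model: mix many randomly timed, similar drops into one signal."""
    total = int(seconds * SAMPLE_RATE)
    signal = [0.0] * total
    for _ in range(int(seconds * drops_per_second)):
        start = rng.randrange(total)
        amp = loudness * rng.uniform(0.5, 1.0)
        burst = drop(freq=rng.uniform(1000.0, 3000.0))
        for i, value in enumerate(burst):
            if start + i < total:
                signal[start + i] += amp * value
    return signal


def rms(signal):
    """A single statistical feature: the root-mean-square level of the signal."""
    return math.sqrt(sum(v * v for v in signal) / len(signal))


def synthesise_like(recording, seconds, drops_per_second, rng):
    """Generate new rain of any length whose RMS level matches the recording."""
    target = rms(recording)
    draft = rain(seconds, drops_per_second, 1.0, rng)
    scale = target / rms(draft)
    return [v * scale for v in draft]


rng = random.Random(42)
recording = rain(1.0, 200, 0.3, rng)  # stands in for a recorded sample of real rain
new_rain = synthesise_like(recording, 3.0, 200, rng)
print(len(new_rain), rms(recording), rms(new_rain))
```

The new signal is three times longer than the "recording" and made of entirely different random drops, yet it matches the one feature that was measured. A realistic system would measure and match many more statistical features than loudness alone.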

For now, the celery stalks are still in use, as are the walrus clips, but it may not be long before film studios completely replace their Foley bag of tricks with computerised solutions like Richard’s. One Wookiee for 3 minutes and a dawn chorus for 5, please.

Become a Foley Artist with Sonic Pi

You can have a go at being a Foley artist yourself. Sonic Pi is a free live-coding synth for music creation that is powerful enough for professional musicians but also designed to get beginners into live coding: combining programming with composing to make live music.

It was designed for use with a Raspberry Pi computer, which is a cheap way to get started, though it works with other computers too. It’s also a great, fun way to start to learn to program.

Play with anything, and everything, you find around the house, junk or otherwise. See what sounds it makes. Record it, and then see what it makes you think of out of context. Build up your own library of sounds, labelling them with things they sound like. Take clips of films, mute the sound and create your own soundscape for them. Store the sound clips and then manipulate them in Sonic Pi, and see if you can use them as the basis of different sounds.

Listen to the example sound clips made with Sonic Pi on their website, then start adapting them to create your own sounds, your own music. What is the most ‘natural sound’ you can find or create using Sonic Pi?

Jane Waite and Paul Curzon, Queen Mary University of London.

More on …

Magazines

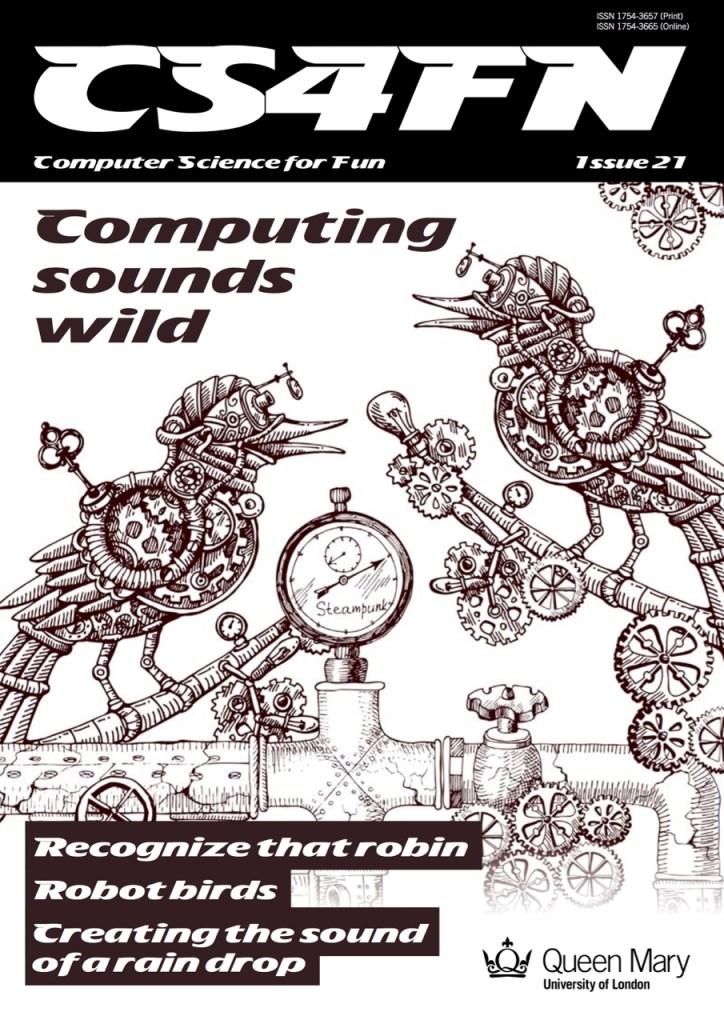

- Issue 21: Computing Sounds Wild explores the work of scientists and engineers who are using computers to understand, identify and recreate wild sounds, especially those of birds. We see how sophisticated algorithms that allow machines to learn can help recognize birds even when they can’t be seen, so helping conservation efforts. We see how computer models help biologists understand animal behaviour, and we look at how electronic and computer generated sounds, having changed music, are now set to change the soundscapes of films. Making electronic sounds is also a great, fun way to become a computer scientist and learn to program.


This blog is funded by EPSRC on research agreement EP/W033615/1.
