The limits of AI

The advent of AI brings difficult new challenges: understanding how it works, and finding ways to verify generative AIs and, importantly, the systems they help create, given that they can write programs too. How can we make them explainable, so we can tell why they came up with the results they did? What are the consequences of how they are trained, and what happens if they are trained on their own outputs? How can they be used to help check that programs, whether human or machine built, really work?

There is also the question of the limits of such systems. Early logical results contributed to an “AI winter” that halted progress in the 20th century, because people did not understand what the results meant.

Theory is just as important as thinking up and developing new applications, but it can be used for good or for ill. We need to understand it well if it is to help us improve practice.

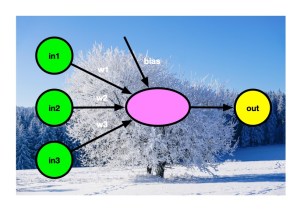

Perceptrons and the AI Winter

A simple but misunderstood theoretical result about a little gadget called a perceptron, now the basis of machine learning tools, led to the AI winter that set AI development back by decades. Learn about perceptrons and how theoretical computer science can help us understand AI … (read on)
